Survey Response Rates

Tips On How To Increase Your Survey Response Rates

Survey Response Rates – Current PeoplePulse Clients:

PeoplePulse recently conducted phone-based research of current PeoplePulse clients to establish the survey response rates that they are receiving for their various research projects. The results are documented below:

| Survey Type | Response rates with invite incentive | Response rates with NO invite incentive |
|---|---|---|
| Post-service Client Survey (short length\*) | 55-75% (with 1 follow-up) | 40-60% (with 1 follow-up) |
| Post-service Candidate Survey (short length) | 60-80% | 40-60% (with 1 follow-up) |
| General Client Satisfaction Surveys (medium length\*\*) | 15-30% (with 1 follow-up) | Less than 10% (with 1 follow-up) |

\* Short length surveys consist of up to 12 questions

\*\* Medium length surveys consist of 12-25 questions

Comments:

(a) Research findings indicate conclusively that with all client surveys, a follow-up / reminder to non-completing recipients after the original invite send date is essential. A follow-up within 10 days after the initial invite is optimal.

(b) Offering an incentive is very important – research results show it will typically lift response rates by 10-15% (depending on the quality and attractiveness of the incentive to your target audience). The consensus amongst many research experts is that ‘useful, relevant information’ is the most effective form of incentive to business audiences.

“… for business-to-business initiatives money (incentives) has never been an effective way to entice survey participation. This is particularly true the higher up in an organization you go. In our modern day economy the real currency is knowledge. Consider offering the respondent a personalized summary of the findings, or access to other research you have executed.” (eg. white papers, salary surveys, skills trends, innovative case studies, etc).

John Towler, a Psychologist and Senior Partner of Creative Organizational Design, also comments:

“Incentives can increase survey response rates dramatically. Our experience has shown that offering a worthwhile incentive can entice up to 50% of the people who would not normally complete the survey, to finish it and send it in. This applies to both paper and pencil surveys and ones that are presented on the Internet.”

A study of Response Rates and Response Quality of Internet-based Surveys by Maastricht University in the Netherlands concluded:

“… vouchers seem to be the most effective incentive in long questionnaires, while prize draws are more efficient in short surveys. A follow-up study revealed that prize draws with small prizes, but a higher chance of winning are most effective in increasing the response rate.”

This finding is supported by the experience of Jonathan Nye (Research Info, 1998):

“We recently finished a strictly web-based research project where we e-mailed the prospective respondents, (who were current customers and users of our client’s software) and asked them to visit the URL where the survey was being hosted. By including the URL in the original e-mail we made it very easy for them to access the survey.

As an incentive our client offered one license of their software package for everyday the survey was online that would be raffled off to 7 lucky respondents.

This was very effective and yielded over a 20% response rate.”

(c) Interestingly, “Post-Placement” surveys to clients asking for feedback on specific recruitment assignments within a week or two of the placement being made yield a far greater response rate than the more general “Client Satisfaction Surveys”. Feedback from other organisations suggests that clients are generally quite receptive to timely requests for feedback regarding a recent assignment via a short survey. However, there seems to be a perception that “Client Satisfaction Surveys” are longer to complete and that results will perhaps get ‘lost’ in the sea of responses.

PeoplePulse’s research of other non-recruitment clients conducting online client satisfaction surveys confirms this – a typical response rate is between 10 and 20% with incentives and follow-up reminders.

CustomInsight, a US company that designs and administers surveys, offered the following comments regarding the link between response rates and survey types:

“Response rates vary widely for different types of surveys. Customer satisfaction surveys and market research surveys often have response rates in the 10% – 30% range. Employee surveys typically have a response rate of 25% – 60%. Regardless of the type of survey you are conducting, you can have a major effect on the number of respondents who complete your survey.”

(d) Post-placement surveys sent to successfully placed candidates typically yield excellent response rates for current PeoplePulse clients.

(e) Research has shown that personalisation of e-mailed survey invites can lift response rates by 7% or more. Dirk Heerwegh’s 2005 study into personalised invites for online surveys (eg. ‘Dear John’ as opposed to ‘Dear Customer’) covered more than 2,500 survey respondents and concluded that personalised survey invites increased response rates by 7.8 percentage points. In addition, respondents that received personalised invites were 2.6% less likely to drop off before completing all survey questions.
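Personalisation of this kind is usually a simple mail-merge step. The sketch below is only an illustration: the `build_greeting` helper and the `first_name` field are hypothetical names, not anything taken from the Heerwegh study or from PeoplePulse. It falls back to a generic greeting when no usable name is on file.

```python
def build_greeting(first_name=None, fallback="Customer"):
    """Return 'Dear <first name>,' when a usable name exists, else a generic greeting."""
    name = (first_name or "").strip()
    # Fall back to the generic form rather than sending 'Dear ,' to a
    # recipient whose name is missing or blank in the invitation list.
    return f"Dear {name}," if name else f"Dear {fallback},"


# Hypothetical recipient records: one with a name, one blank, one missing.
recipients = [{"first_name": "John"}, {"first_name": "  "}, {}]
for recipient in recipients:
    print(build_greeting(recipient.get("first_name")))
```

The first recipient gets "Dear John," and the other two get "Dear Customer,".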

As with traditional paper or phone research methods, the rate of response for online surveys varies according to a range of factors such as: the target audience being surveyed, the nature of the survey content, the perceived value of incentive being offered, the day of week and time of day the survey takes place, the level of personalisation, etc.

The following information regarding response rates is drawn from 199 online surveys conducted in the US with a total of 523,790 invitations sent to potential respondents.

(a) Online Survey Response Rates

Median survey response rate: 26.45%

Average survey response rate (sample size of less than 1,000 recipients): 41.21%

- Response rates vary greatly depending on the target audience and the nature of the research. The average combined response rate for all survey types is 26% (with incentives and follow-ups).

- Large invitation lists are associated with lower response rates. It is important to use as focused and high-quality an e-mail list as possible (especially when acquiring your sample on a paid basis).
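A response rate is the number of completed surveys divided by the number of invitations sent. The sketch below shows that calculation and its inverse, which estimates how many invitations a target number of responses would need. The function names and sample figures are illustrative assumptions, and the median rate quoted above is used only as an example input, not as a prediction for any particular survey.

```python
import math


def response_rate(responses, invitations):
    """Response rate as a percentage of invitations sent."""
    if invitations <= 0:
        raise ValueError("invitations must be positive")
    return 100.0 * responses / invitations


def invitations_needed(target_responses, expected_rate_pct):
    """Smallest invitation count expected to yield the target number of responses."""
    if expected_rate_pct <= 0:
        raise ValueError("expected_rate_pct must be positive")
    return math.ceil(target_responses * 100.0 / expected_rate_pct)


print(response_rate(130, 500))         # 26.0
print(invitations_needed(200, 26.45))  # 757
```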

(b) Online Survey Response Times

Over half of online survey responses are likely to arrive in the first day.

Seven out of eight responses arrive within the first week.

We recommend a run time of at least two weeks for surveys in which it is important to get a full response. This is especially true for firm-wide employee surveys, where employees may be on two-week vacations.

(c) Online Survey Responses and the Time of Day

Response rates and times are best for surveys sent out between 6:00 AM and 9:00 AM, at the beginning of the work day – but not on Monday morning.

Though response times are quicker in the evenings, response rates are low.

Business-related surveys that would otherwise be sent after 3:00 PM should be held until the next business day.
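These timing rules can be turned into a simple scheduling check. The sketch below is one strict reading of them, under stated assumptions: it always targets the 6:00 AM to 9:00 AM window (which also covers the after-3:00 PM rule), treats Tuesday to Friday as the allowed days, and uses plain local times with no time-zone handling. The `next_send_time` name is hypothetical.

```python
from datetime import datetime, time, timedelta

WINDOW_START = time(6, 0)
WINDOW_END = time(9, 0)
# Tuesday to Friday. datetime.weekday() counts Monday as 0, so Monday and
# the weekend are excluded (skipping weekends is an assumption of this sketch).
ALLOWED_WEEKDAYS = {1, 2, 3, 4}


def next_send_time(now):
    """Return `now` if it is inside an allowed morning window, else the next window start."""
    if now.weekday() in ALLOWED_WEEKDAYS and WINDOW_START <= now.time() < WINDOW_END:
        return now
    # Use today's window if it has not opened yet, otherwise start from tomorrow.
    candidate = now.date() if now.time() < WINDOW_START else now.date() + timedelta(days=1)
    while candidate.weekday() not in ALLOWED_WEEKDAYS:
        candidate += timedelta(days=1)
    return datetime.combine(candidate, WINDOW_START)


# Friday 5 January 2024 at 4:00 PM is held until Tuesday 9 January at 6:00 AM.
print(next_send_time(datetime(2024, 1, 5, 16, 0)))
```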

(d) Survey Length

Survey length has a negative influence on mail survey response rates: the longer the survey, the lower the response rate is likely to be (Heberlein & Baumgartner, 1978; Steele, Schwendig & Kilpatrick, 1992; Yammarino, Skinner & Childers, 1991). Recent studies have also indicated that respondents in business-oriented studies were more sensitive than consumers to survey length (Jobber & Saunders, 1993) and that survey length was one of the main reasons for business persons’ non-response (Tomaskovic-Devey, et al., 1994).

(e) Issue Relevance

Salience of an issue to the sampled population has been found to have a strong positive correlation with response rate for postal and Internet-based surveys (Sheehan & McMillan, 1999; Watt, 1999). Salience has been defined as the association of importance and/or timeliness with a specific topic (Martin, 1994). For example, a survey on homeowner taxes would likely be more salient to a population of homeowners than a population of college students.

They noted that “if a person attaches little interest or importance to the particular content of a survey, then it will not matter if the survey form is short; the person still is unlikely to respond.”

(f) Which incentive performs better? A $2,500 sweepstake OR a $2 cash reward for everyone?

In a study conducted by e-Rewards Market Research, two random samples were used: 4,000 people were invited to complete the survey for entry into a sweepstakes drawing of $2,500, and another 4,000 people were invited to complete the survey for $2.00 in cash. Both groups launched and closed on the same day of the week and the same time of the day. It was a one-minute survey about books and music.

The results: Within 7 days after sending the invite, response rates were:

- 19.3% for $2 cash ‘pay all’ sample.

- 12.2% for $2,500 sweepstake sample.

Source: Kurt Knapton, Executive Vice President, e-Rewards Market Research
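The response rates above can also be read as a cost per completed survey. The study as quoted does not report costs, so the sketch below rests on two assumptions: response counts are derived from the quoted rates and the 4,000 invitations per group, and the incentive payout is the only cost counted. The `cost_per_response` helper is hypothetical.

```python
def cost_per_response(invited, response_rate_pct, fixed_cost=0.0, payment_per_response=0.0):
    """Return (responses, total incentive cost, incentive cost per response)."""
    responses = round(invited * response_rate_pct / 100)
    if responses == 0:
        raise ValueError("no responses, so cost per response is undefined")
    total_cost = fixed_cost + payment_per_response * responses
    return responses, total_cost, total_cost / responses


# 'Pay all' group: every respondent receives $2.
pay_all = cost_per_response(4000, 19.3, payment_per_response=2.0)
# Sweepstake group: a single $2,500 prize, however many people respond.
sweepstake = cost_per_response(4000, 12.2, fixed_cost=2500.0)

print(pay_all[0], round(pay_all[2], 2))        # 772 2.0
print(sweepstake[0], round(sweepstake[2], 2))  # 488 5.12
```

Under these assumptions the $2 cash offer brings in more responses and also costs less per response (about $2.00 against about $5.12).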

Case Study One – Online Surveys Lessons Learned by IBM

IBM’s e-business Innovation Centre in Toronto ran an online business-to-business survey between 2000 and 2001, and after tweaking a number of variables they managed to double their response rates in the second year of running the survey. Here are some of the lessons they learnt:

Case Study Two – E-mail Subject Line Testing

In this example, the target opt-in list was broken up into three randomly selected groups. Three different emails were sent containing the same body text but different subject lines. Here are the headlines and results:

- Subject Line 1: “FIRSTNAME, November Client Attraction Newsletter out now”

- Subject Line 2: “FIRSTNAME, here’s a new 7 Marketing Trends report for you”

- Subject Line 3: “FIRSTNAME, 7 Marketing Trends I think you should know about”

The winning headline outperformed the second best headline by 63% and the worst headline by 125%! Making this type of split test an integral part of your online marketing process allows you to ramp up your results by orders of magnitude on an ongoing basis.

SIDEBAR: Two factors that can influence the effectiveness of email subject lines are Personalisation and Proximity.

Personalisation: “FIRSTNAME, how to improve your profits” will perform better than “How to improve your profits”.

Proximity: Proximity means writing your subject lines such that they convey your message as quickly as possible (preferably in the first 4 or 5 words). Most email clients [eg. MS Outlook] only display the first few words of a subject line, so it pays to make them count. Subject Line 3 above communicates the most information the fastest, which is why I believe it performed best.

Source: Will Swayne’s blog – http://www.marketing-results.com.au/blog
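A split test like the one in Case Study Two needs two things: a random division of the list into groups, and a way to express how far the winner beat the others. The sketch below shows one way to do both. The helper names, the example addresses and the sample rates are all hypothetical, since the case study does not publish its underlying figures.

```python
import random


def split_into_groups(recipients, n_groups=3, seed=None):
    """Shuffle recipients and deal them into n_groups groups of near-equal size."""
    shuffled = list(recipients)
    random.Random(seed).shuffle(shuffled)
    # Dealing every n-th item to the same group keeps sizes within one of each other.
    return [shuffled[i::n_groups] for i in range(n_groups)]


def relative_lift_pct(winner_rate, other_rate):
    """How much higher winner_rate is than other_rate, as a percentage of other_rate."""
    return 100.0 * (winner_rate - other_rate) / other_rate


# Hypothetical opt-in list of ten addresses split into three groups.
groups = split_into_groups([f"user{i}@example.com" for i in range(10)], seed=1)
print([len(group) for group in groups])  # [4, 3, 3]

# Hypothetical rates: a 9% result against a 4% result is a 125% relative lift.
print(relative_lift_pct(9.0, 4.0))  # 125.0
```

Note that "outperformed by 125%" is a relative figure: a winner at 9% against a loser at 4% differs by only 5 percentage points.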

Further reading

Extract from HostedWire.com

“What survey response rate can I expect?”

Survey response rates vary widely and depend on a variety of factors. It is difficult to predict the level of survey participation you will receive, but with understanding of certain factors that influence response rates, you may be able to determine approximately, or even increase your response rate.

Response rates can be influenced by factors such as customer loyalty, brand recognition, incentives (ranging from honorarium monetary payments and prizes to published survey results), invitation wording (how well you pitch your survey to potential participants), marketing of survey, perceived benefit from participating in survey, customer demographics, how actively customers or employees are engaged in the improvement process, and other things.

An important incentive to survey respondents is that their opinions will be heard and action will be taken based on their feedback. If respondents believe that participating in a survey will result in real improvements, response rates may increase, as will the quality of the feedback.

Response rates can soar past 85% (about 43 responses for every 50 invitations sent) when the respondent population is motivated and the survey is well-executed. Response rates can also fall below 2% (about 1 response for every 50 invitations sent) when the respondent population is less-targeted, when contact information is unreliable, or where there is less incentive or little motivation to respond.

Internal Survey Response Rates

Internal surveys (i.e. a company surveying its employees) generally have a much higher response rate than external surveys (e.g. surveys aimed at customers or people outside of an organisation).

Internal surveys will generally receive a 30-40% response rate or more on average, compared to an average 10-15% response rate for external surveys. Response rates may be even higher if employees feel comfortable with the survey environment and feel that their anonymity is protected.

Useful Nuggets

- Upfront Incentives Work:

One study on survey incentives showed that mailing a $5 “gift” cheque along with the questionnaire was twice as effective as offering $50 paid after the completed survey was received by the researcher.

- James & Bolstein, 1992.

- Subject Lines: Keep Them Short and Sweet:

A recent study done by email monitoring company Return Path showed that, “subject lines with 49 or fewer characters had open rates 12.5 percent higher than for those with 50 or more,” and that, “click-through rates for subject lines with 49 or fewer characters were 75 percent higher than for those with 50 or more.”

- Survey Completion Times – How long after sending your survey can you expect responses?

  - 50% of completed surveys received within 12 hours of online survey launch,

  - 65% received within 24 hours of online survey launch,

  - 80% within 48 hours of online survey launch,

  - 90% within 3 days of online survey launch.

Source: Bruce E Segal
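Two of the nuggets above can be used as quick checks. The sketch below tests a subject line against the 49-character threshold once the FIRSTNAME placeholder has been filled in, and projects a final completion count from the completion-time percentages. Both helper names are hypothetical, and the projection assumes your survey follows the quoted curve, which may not hold for every audience.

```python
MAX_SUBJECT_CHARS = 49  # threshold from the Return Path figures quoted above

# Share of final completions received by each checkpoint (hours since launch),
# taken from the completion-time figures quoted above; 3 days is 72 hours.
CUMULATIVE_SHARE = {12: 0.50, 24: 0.65, 48: 0.80, 72: 0.90}


def subject_is_short(subject_template, first_name):
    """Check the subject length after the FIRSTNAME placeholder is filled in."""
    subject = subject_template.replace("FIRSTNAME", first_name)
    return len(subject) <= MAX_SUBJECT_CHARS


def projected_total(completions_so_far, hours_since_launch):
    """Estimate final completions from the count at one of the quoted checkpoints."""
    if hours_since_launch not in CUMULATIVE_SHARE:
        raise ValueError("hours_since_launch must be 12, 24, 48 or 72")
    return round(completions_so_far / CUMULATIVE_SHARE[hours_since_launch])


print(subject_is_short("FIRSTNAME, how to improve your profits", "John"))  # True
print(projected_total(130, 24))  # 200
```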

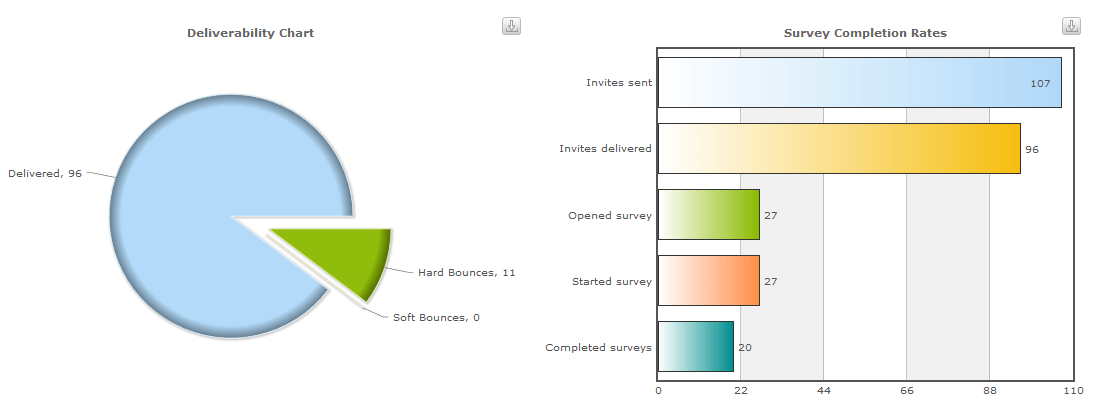

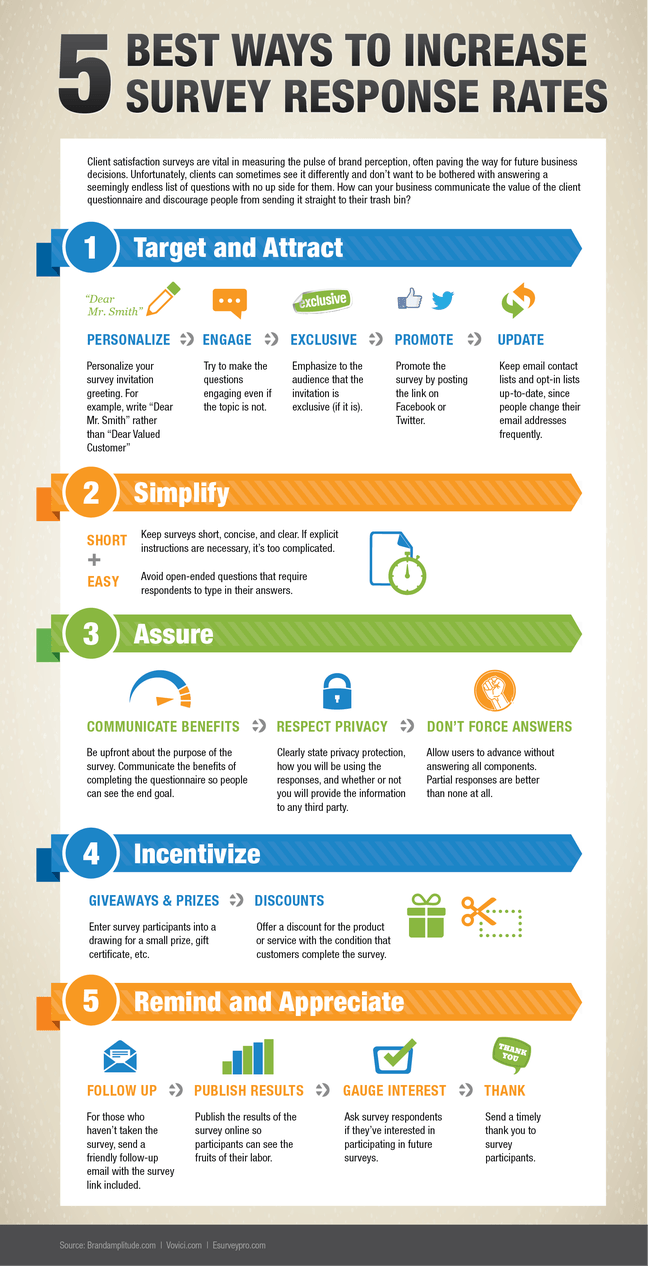

Survey Response Rate Infographic

Why not have a look at PeoplePulse today?

Contact PeoplePulse today on ph +61 2 9232 0172 to discuss how we may be able to help you with your survey requirements. We have a fantastic real time response rate report – so make sure you ask to take a look!

Or if you are interested in a live demonstration of full featured online survey software offered by a full service provider, please request a demo.


Exceptional Survey. Solutions
